|

| By Laurent Itti | itti@usc.edu | http://jevois.org | GPL v3 |

| |||

| Video Mapping: NONE 0 0 0.0 YUYV 320 240 2.1 JeVois DarknetSingle | |||

| Video Mapping: YUYV 544 240 15.0 YUYV 320 240 15.0 JeVois DarknetSingle | |||

| Video Mapping: YUYV 448 240 15.0 YUYV 320 240 15.0 JeVois DarknetSingle |

Module Documentation

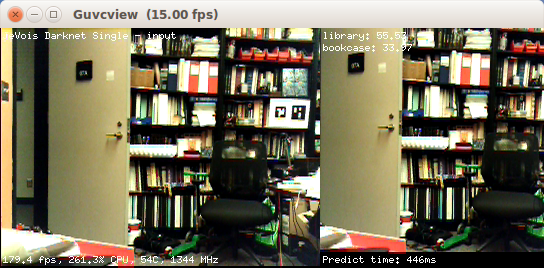

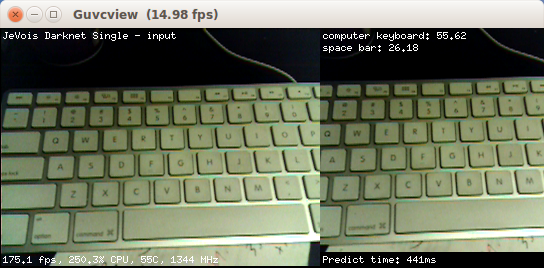

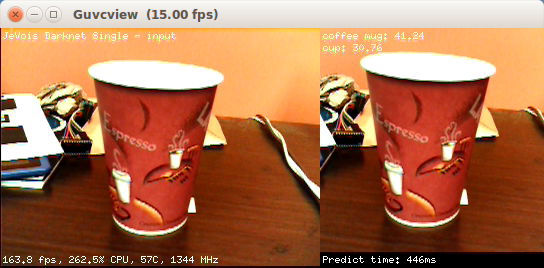

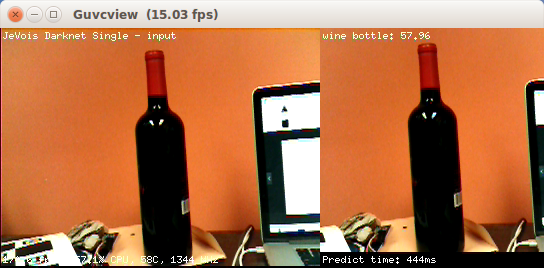

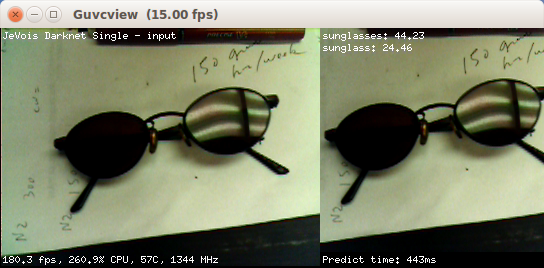

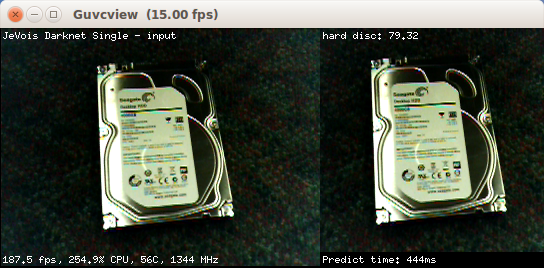

Darknet is a popular neural network framework. This module identifies the object in a square region in the center of the camera field of view using a deep convolutional neural network.

The deep network analyzes the image by filtering it using many different filter kernels, and several stacked passes (network layers). This essentially amounts to detecting the presence of both simple and complex parts of known objects in the image (e.g., from detecting edges in lower layers of the network to detecting car wheels or even whole cars in higher layers). The last layer of the network is reduced to a vector with one entry per known kind of object (object class). This module returns the class names of the top scoring candidates in the output vector, if any have scored above a minimum confidence threshold. When nothing is recognized with sufficiently high confidence, there is no output.

Darknet is a great alternative to popular neural network frameworks like Caffe, TensorFlow, MxNet, pyTorch, Theano, etc as it features: 1) small footprint which is great for small embedded systems; 2) hardware acceleration using ARM NEON instructions; 3) support for large GPUs when compiled on expensive servers, which is useful to train the neural networks on big servers, then copying the trained weights directly to JeVois for use with live video.

See https://pjreddie.com/darknet for more details about darknet.

This module runs a Darknet network and shows the top-scoring results. The network is currently a bit slow, hence it is only run once in a while. Point your camera towards some interesting object, make the object fit in the picture shown at right (which will be fed to the neural network), keep it stable, and wait for Darknet to tell you what it found. The framerate figures shown at the bottom left of the display reflect the speed at which each new video frame from the camera is processed, but in this module this just amounts to converting the image to RGB, sending it to the neural network for processing in a separate thread, and creating the demo display. Actual network inference speed (time taken to compute the predictions on one image) is shown at the bottom right. See below for how to trade-off speed and accuracy.

Note that by default this module runs the Imagenet1k tiny Darknet (it can also run the slightly slower but a bit more accurate Darknet Reference network; see parameters). There are 1000 different kinds of objects (object classes) that these networks can recognize (too long to list here). The input layer of these two networks is 224x224 pixels by default. This modules takes a crop at the center of the video image, with size determined by the network input size. With the default network parameters, this module hence requires at least 320x240 camera sensor resolution. The networks provided on the JeVois microSD image have been trained on large clusters of GPUs, typically using 1.2 million training images from the ImageNet dataset.

Sometimes this module will make mistakes! The performance of darknet-tiny is about 58.7% correct (mean average precision) on the test set, and Darknet Reference is about 61.1% correct on the test set, using the default 224x224 network input layer size.

Neural network size and speed

When using a video mapping with USB output, the network is automatically resized to a square size that is the difference between the USB output video width and the camera sensor input width (e.g., when USB video mode is 544x240 and camera sensor mode is 320x240, the network will be resized to 224x224 since 224=544-320).

The network size direcly affects both speed and accuracy. Larger networks run slower but are more accurate.

For example:

- with USB output 544x240 (network size 224x224), this module runs at about 450ms/prediction.

- with USB output 448x240 (network size 128x128), this module runs at about 180ms/prediction.

When using a videomapping with no USB output, the network is not resized (since we would not know what to resize it to). You can still change its native size by changing the network's config file, for example, change the width and height fields in JEVOIS:/share/darknet/single/cfg/tiny.cfg.

Note that network dims must always be such that they fit inside the camera input image.

Serial messages

When detections are found with confidence scores above thresh, a message containing up to top category:score pairs will be sent per video frame. Exact message format depends on the current serstyle setting and is described in Standardized serial messages formatting. For example, when serstyle is Detail, this module sends:

DO category:score category:score ... category:score

where category is a category name (from namefile) and score is the confidence score from 0.0 to 100.0 that this category was recognized. The pairs are in order of decreasing score.

See Standardized serial messages formatting for more on standardized serial messages, and Helper functions to convert coordinates from camera resolution to standardized for more info on standardized coordinates.

| Parameter | Type | Description | Default | Valid Values |

|---|---|---|---|---|

| (Darknet) netw | Net | Network to load. This meta-parameter sets parameters dataroot, datacfg, cfgfile, weightfile, and namefile for the chosen network. | Net::Tiny | Net_Values |

| (Darknet) dataroot | std::string | Root path for data, config, and weight files. If empty, use the module's path. | JEVOIS_SHARE_PATH /darknet/single | - |

| (Darknet) datacfg | std::string | Data configuration file (if relative, relative to dataroot) | cfg/imagenet1k.data | - |

| (Darknet) cfgfile | std::string | Network configuration file (if relative, relative to dataroot) | cfg/tiny.cfg | - |

| (Darknet) weightfile | std::string | Network weights file (if relative, relative to dataroot) | weights/tiny.weights | - |

| (Darknet) namefile | std::string | Category names file, or empty to fetch it from the network config file (if relative, relative to dataroot) | - | |

| (Darknet) top | unsigned int | Max number of top-scoring predictions that score above thresh to return | 5 | - |

| (Darknet) thresh | float | Threshold (in percent confidence) above which predictions will be reported | 20.0F | jevois::Range<float>(0.0F, 100.0F) |

| (Darknet) threads | int | Number of parallel computation threads | 6 | jevois::Range<int>(1, 1024) |

| Detailed docs: | DarknetSingle |

|---|---|

| Copyright: | Copyright (C) 2017 by Laurent Itti, iLab and the University of Southern California |

| License: | GPL v3 |

| Distribution: | Unrestricted |

| Restrictions: | None |

| Support URL: | http://jevois.org/doc |

| Other URL: | http://iLab.usc.edu |

| Address: | University of Southern California, HNB-07A, 3641 Watt Way, Los Angeles, CA 90089-2520, USA |