
By Laurent Itti · itti@usc.edu · http://jevois.org · GPL v3


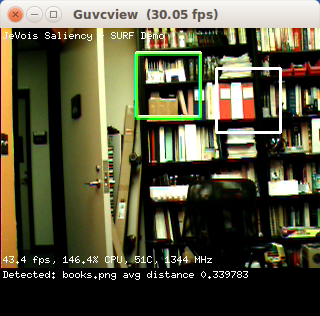

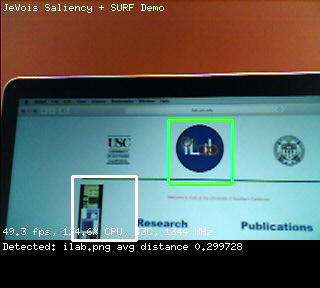

Video Mapping: `YUYV 320 288 30.0 YUYV 320 240 30.0 JeVois SaliencySURF`

Module Documentation

This module finds objects by matching keypoint descriptors between a current set of salient regions and a set of training images.
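To make "salient regions" concrete: given a salient point and the `rsiz` parameter listed in the table below, one plausible way to cut out a region is to center an `rsiz` x `rsiz` window on the point and shift it back inside the frame when it would spill over an edge. The sketch below assumes exactly that; `Rect` and `salientRegion` are hypothetical names, and the module's actual region logic may differ (for instance, it might crop instead of shift).

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical axis-aligned rectangle; (x, y) is the top-left corner.
struct Rect { int x, y, w, h; };

// Return an rsiz x rsiz window centered on the salient point (cx, cy),
// shifted as needed so that it stays entirely inside an imgw x imgh image.
// Assumption: the region is always fully inside the frame (requires rsiz <= imgw, imgh).
Rect salientRegion(int cx, int cy, int rsiz, int imgw, int imgh)
{
  assert(rsiz > 0 && rsiz <= imgw && rsiz <= imgh);
  int const x = std::clamp(cx - rsiz / 2, 0, imgw - rsiz);
  int const y = std::clamp(cy - rsiz / 2, 0, imgh - rsiz);
  return Rect{x, y, rsiz, rsiz};
}
```

For the 320x240 camera frame and the default `rsiz` of 64, a point at (160, 120) gives a window whose top-left corner is (128, 88), while a point near the bottom-left corner is pushed back so the window still fits.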

Here we use SURF keypoints and descriptors as provided by OpenCV. The algorithm is quite slow and consists of 3 phases:

- detect keypoint locations,

- compute keypoint descriptors,

- and match descriptors from the current image to the training-image descriptors.
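The third phase can be pictured as a nearest-neighbor search in descriptor space. The following brute-force sketch assumes descriptors are float vectors compared with the Euclidean (L2) distance, similar in spirit to OpenCV's `cv::BFMatcher` with `cv::NORM_L2`; the names `Descriptor`, `Match`, `l2`, and `matchDescriptors` are hypothetical, and the module may well use a different (e.g., approximate) matcher.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <limits>
#include <vector>

// A descriptor is a fixed-length float vector (standard SURF uses 64 floats).
using Descriptor = std::vector<float>;

// One candidate match: index of the query descriptor, index of its nearest
// training descriptor, and the L2 distance between the two.
struct Match { std::size_t query, train; float distance; };

// Euclidean distance between two descriptors of equal length.
float l2(Descriptor const & a, Descriptor const & b)
{
  assert(a.size() == b.size());
  float sum = 0.0F;
  for (std::size_t i = 0; i < a.size(); ++i) { float const d = a[i] - b[i]; sum += d * d; }
  return std::sqrt(sum);
}

// Brute-force matching: for every descriptor of the current image, find the
// closest descriptor among the training descriptors.
std::vector<Match> matchDescriptors(std::vector<Descriptor> const & query,
                                    std::vector<Descriptor> const & train)
{
  std::vector<Match> matches;
  if (train.empty()) return matches;
  for (std::size_t q = 0; q < query.size(); ++q)
  {
    Match best{q, 0, std::numeric_limits<float>::max()};
    for (std::size_t t = 0; t < train.size(); ++t)
    {
      float const d = l2(query[q], train[t]);
      if (d < best.distance) best = Match{q, t, d};
    }
    matches.push_back(best);
  }
  return matches;
}
```

Each query descriptor gets exactly one candidate match here; deciding which of those candidates count as "good" is a separate step governed by parameters such as `distthresh`.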

Here, we alternate between computing keypoints and descriptors on one frame (or over several frames, depending on how long that takes) and matching those descriptors on the next frame. This module thus also shows how to let some computation continue after the process() function has returned: keypoint detection and descriptor computation keep running even outside process().
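The alternation between frames can be sketched with `std::async` and `std::future`: one call to `process()` launches detection and description in a background thread and returns immediately, and a later call collects the result (without blocking if it is not ready yet) and would run the matching. Everything here is a hypothetical illustration: `Features`, `computeFeatures`, and `AlternatingWorker` are made-up names, `computeFeatures` only simulates the slow work, and this is not the module's actual code.

```cpp
#include <cassert>
#include <chrono>
#include <cstddef>
#include <future>

// Hypothetical stand-in for the keypoints and descriptors of one frame.
struct Features { int frame; std::size_t count; };

// Hypothetical stand-in for the slow detect + describe phases.
Features computeFeatures(int frame)
{
  return Features{frame, static_cast<std::size_t>(10 * frame)};
}

// Keeps feature computation running between calls to process().
class AlternatingWorker
{
public:
  // Called once per video frame. Returns true when finished features were
  // collected during this call (this is where matching would happen).
  bool process(int frame, Features & out)
  {
    if (!itsFut.valid())
    {
      // No pending work: start detection + description on this frame.
      // The task keeps running in a background thread after we return.
      itsFut = std::async(std::launch::async, computeFeatures, frame);
      return false;
    }

    // Pending work: do not stall the video pipeline if it is not done yet.
    if (itsFut.wait_for(std::chrono::seconds(0)) != std::future_status::ready) return false;

    out = itsFut.get(); // the future becomes invalid, so the next call starts new work
    // ... match `out` against the training descriptors here ...
    return true;
  }

private:
  std::future<Features> itsFut;
};
```

One design detail worth knowing: a `std::future` obtained from `std::async` blocks in its destructor until the task finishes, so destroying the worker waits for any computation still in flight rather than abandoning it.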

Also see the ObjectDetect module for a related algorithm (without attention).

Training

Simply add images of the objects you want to detect into JEVOIS:/modules/JeVois/SaliencySURF/images/ on your JeVois microSD card.

Those will be processed when the module starts.

The names of recognized objects returned by this module are simply the file names of the pictures you have added in that directory. No additional training procedure is needed.

Beware that the more images you add, the slower the algorithm will run, and the higher the chance that some of your objects will be confused with one another.
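A rough way to see the slowdown, assuming exhaustive (brute-force) matching of every descriptor in a salient region against every descriptor of every training image: with $n_q$ descriptors in the region, $n_i$ descriptors in training image $i$, $M$ training images, and descriptors of length $D$ (64 for standard SURF), the work per region grows as

$$
C \approx n_q \, D \sum_{i=1}^{M} n_i .
$$

For example, with the illustrative values $n_q = 100$, $D = 64$, and $M = 10$ images of $n_i = 200$ descriptors each, $C \approx 100 \cdot 64 \cdot 2000 = 12{,}800{,}000$ multiply-adds per region, and each additional image of similar size adds another $100 \cdot 64 \cdot 200 = 1{,}280{,}000$.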

This module provides parameters that allow you to determine how strict a match should be. With stricter matching, you may sometimes miss an object (i.e., it was there, but was not detected by the algorithm). With looser matching, you may get more false alarms (i.e., there was something else in the camera's view, but it was matched as one of your objects). If you have difficulty getting any matches, try loosening the settings, for example:

```
setpar goodpts 5...100
setpar distthresh 0.5
```
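To see why lowering the minimum of `goodpts` and raising `distthresh` loosen the matching, consider the following sketch of a per-object decision. It assumes that a match counts as good when its distance is below `distthresh`, that a training image needs at least `goodpts.min` good matches to qualify, that at most the best `goodpts.max` of them are kept, and that the qualifying image with the smallest mean good-match distance wins, its file name being reported as the object name. These rules, and the names `GoodPts`, `TrainingResult`, `score`, and `bestObject`, are assumptions for illustration, not the module's documented behavior.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <limits>
#include <string>
#include <vector>

// Hypothetical stand-in for jevois::Range<size_t>: inclusive [min, max].
struct GoodPts { std::size_t min, max; };

// Match distances between the current region and one training image,
// whose file name doubles as the object name.
struct TrainingResult { std::string name; std::vector<double> distances; };

// Mean distance of the kept good matches (lower is better), or infinity
// when the training image does not qualify under the assumed rules.
double score(std::vector<double> const & distances, double distthresh, GoodPts goodpts)
{
  assert(goodpts.min <= goodpts.max);
  std::vector<double> good;
  for (double d : distances) if (d < distthresh) good.push_back(d);
  if (good.empty() || good.size() < goodpts.min) return std::numeric_limits<double>::infinity();

  std::sort(good.begin(), good.end());                    // best matches first
  if (good.size() > goodpts.max) good.resize(goodpts.max); // keep at most goodpts.max

  double sum = 0.0;
  for (double d : good) sum += d;
  return sum / static_cast<double>(good.size());
}

// File name of the best-scoring training image, or "" if none qualifies.
std::string bestObject(std::vector<TrainingResult> const & results, double distthresh, GoodPts goodpts)
{
  std::string best;
  double bestScore = std::numeric_limits<double>::infinity();
  for (TrainingResult const & r : results)
  {
    double const s = score(r.distances, distthresh, goodpts);
    if (s < bestScore) { bestScore = s; best = r.name; }
  }
  return best;
}
```

Under these assumed rules, `GoodPts{3, 100}` demands three close matches before an object is reported, whereas `GoodPts{1, 1}` accepts a single very close match: exactly the kind of loosening that trades misses for false alarms.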

| Parameter | Type | Description | Default | Valid Values |
|---|---|---|---|---|
| (SaliencySURF) inhsigma | float | Sigma (pixels) used for inhibition of return | 32.0F | - |
| (SaliencySURF) regions | size_t | Number of salient regions | 2 | - |
| (SaliencySURF) rsiz | size_t | Width and height (pixels) of salient regions | 64 | - |
| (SaliencySURF) save | bool | Save regions when true, useful to create a training set. They will be saved to /jevois/data/saliencysurf/ | false | - |
| (ObjectMatcher) hessian | double | Hessian threshold | 800.0 | - |
| (ObjectMatcher) traindir | std::string | Directory where training images are | images | - |
| (ObjectMatcher) goodpts | jevois::Range<size_t> | Number range of good matches considered | jevois::Range<size_t>(15, 100) | - |
| (ObjectMatcher) distthresh | double | Maximum distance for a match to be considered good | 0.2 | - |
| (Saliency) cweight | byte | Color channel weight | 255 | - |
| (Saliency) iweight | byte | Intensity channel weight | 255 | - |
| (Saliency) oweight | byte | Orientation channel weight | 255 | - |
| (Saliency) fweight | byte | Flicker channel weight | 255 | - |
| (Saliency) mweight | byte | Motion channel weight | 255 | - |
| (Saliency) centermin | size_t | Lowest (finest) of the 3 center scales | 2 | - |
| (Saliency) deltamin | size_t | Lowest (finest) of the 2 center-surround delta scales | 3 | - |
| (Saliency) smscale | size_t | Scale of the saliency map | 4 | - |
| (Saliency) mthresh | byte | Motion threshold | 0 | - |
| (Saliency) fthresh | byte | Flicker threshold | 0 | - |
| (Saliency) msflick | bool | Use multiscale flicker computation | false | - |
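The `regions` and `inhsigma` parameters suggest a classic winner-take-all loop with inhibition of return. In the hypothetical sketch below (`SalMap`, `Point`, and `attend` are made-up names), the most salient location is picked, then the map is multiplied by one minus a Gaussian of standard deviation `inhsigma` centered on that winner, so the next pick lands elsewhere. For simplicity, `inhsigma` is measured in saliency-map pixels here; how the module converts between image and map coordinates (see `smscale`) is not modeled, and its exact inhibition shape is an assumption.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical row-major saliency map of size w x h.
struct SalMap
{
  int w, h;
  std::vector<float> v;
  float & at(int x, int y) { return v[static_cast<std::size_t>(y) * w + x]; }
};

struct Point { int x, y; };

// Pick `regions` winners. The map is taken by value, so the caller's map is untouched.
std::vector<Point> attend(SalMap map, std::size_t regions, float inhsigma)
{
  assert(inhsigma > 0.0F && map.v.size() == static_cast<std::size_t>(map.w) * map.h);
  std::vector<Point> winners;
  float const s2 = 2.0F * inhsigma * inhsigma;

  for (std::size_t i = 0; i < regions; ++i)
  {
    // Winner-take-all: find the current maximum.
    Point best{0, 0};
    for (int y = 0; y < map.h; ++y)
      for (int x = 0; x < map.w; ++x)
        if (map.at(x, y) > map.at(best.x, best.y)) best = Point{x, y};
    winners.push_back(best);

    // Inhibition of return: multiply by (1 - Gaussian), which fully
    // suppresses the winner and leaves distant locations nearly unchanged.
    for (int y = 0; y < map.h; ++y)
      for (int x = 0; x < map.w; ++x)
      {
        float const dx = float(x - best.x), dy = float(y - best.y);
        map.at(x, y) *= 1.0F - std::exp(-(dx * dx + dy * dy) / s2);
      }
  }
  return winners;
}
```

With a small sigma, a strong neighbor right next to the first winner can still be picked second; with a larger sigma, the whole neighborhood is suppressed and attention jumps to a more distant peak.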

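params.cfg file (these values are set when the module is loaded, overriding the defaults listed in the table above):

```
# Default parameters that are set upon loading the module
goodpts = 4...15
distthresh = 0.4
```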
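The file uses one `name = value` pair per line, with `#` starting a comment, and writes a range parameter such as `goodpts` as `min...max`. The hypothetical reader below (`trim`, `parseParams`, and `parseRange` are illustrative names, not JeVois's own parser) shows how those two pieces of syntax map to values.

```cpp
#include <cassert>
#include <cstddef>
#include <map>
#include <sstream>
#include <string>

// Remove spaces and tabs from both ends of a string.
std::string trim(std::string const & s)
{
  std::size_t const b = s.find_first_not_of(" \t");
  if (b == std::string::npos) return "";
  std::size_t const e = s.find_last_not_of(" \t");
  return s.substr(b, e - b + 1);
}

// Parse "name = value" lines; everything after '#' is a comment.
std::map<std::string, std::string> parseParams(std::string const & text)
{
  std::map<std::string, std::string> params;
  std::istringstream in(text);
  std::string line;
  while (std::getline(in, line))
  {
    line = trim(line.substr(0, line.find('#'))); // drop comment, if any
    std::size_t const eq = line.find('=');
    if (line.empty() || eq == std::string::npos) continue;
    params[trim(line.substr(0, eq))] = trim(line.substr(eq + 1));
  }
  return params;
}

// Split a range written as "min...max"; fails on bad syntax or min > max.
bool parseRange(std::string const & s, std::size_t & mn, std::size_t & mx)
{
  std::size_t const dots = s.find("...");
  if (dots == std::string::npos) return false;
  try
  {
    mn = std::stoul(s.substr(0, dots));
    mx = std::stoul(s.substr(dots + 3));
  }
  catch (...) { return false; } // std::invalid_argument or std::out_of_range
  return mn <= mx;
}
```

Read this way, `goodpts = 4...15` means at least 4 and at most 15 good matches, which is the same `min...max` form used with `setpar` on the command line.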

| Detailed docs: | SaliencySURF |
|---|---|
| Copyright: | Copyright (C) 2016 by Laurent Itti, iLab and the University of Southern California |
| License: | GPL v3 |
| Distribution: | Unrestricted |
| Restrictions: | None |
| Support URL: | http://jevois.org/doc |
| Other URL: | http://iLab.usc.edu |
| Address: | University of Southern California, HNB-07A, 3641 Watt Way, Los Angeles, CA 90089-2520, USA |